Participating media, AKA fog/steam/smoke is one of those things that can help make a scene look real but also is crazy hard to render as it involves calculating how light bounces around within the media.

id Software decided to add fog volumes to the idTech 3 engine, so we must add them back, but since this whole project is about physically accurate rendering, we want the real deal, physically correct fog with all its light interactions, no old school hacks, no alpha tricks, so let's talk about some new hacks and tricks.

Warning: the algorithm that I'm about to describe is cursed, if you use it Monte Carlo's lawyers will send you cease and desist letters, light transporters will demonstrate in front of your house, the photon mapping guild will disown you, you've been warned.

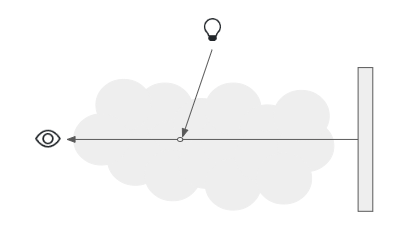

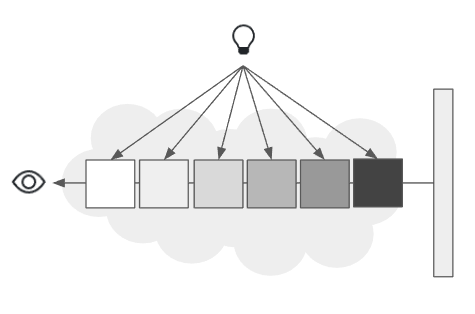

First of all, let's talk about the problem we are facing. Participating media involve some light interacting with particles and said light been absorbed or scattered, we need to know how much light is in-scattered in the camera's direction, here's a typical diagram of how that looks.

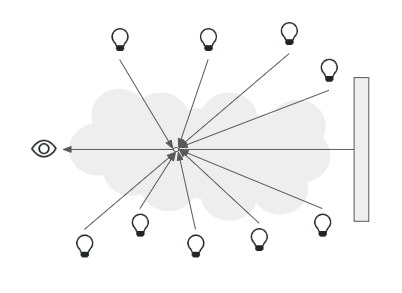

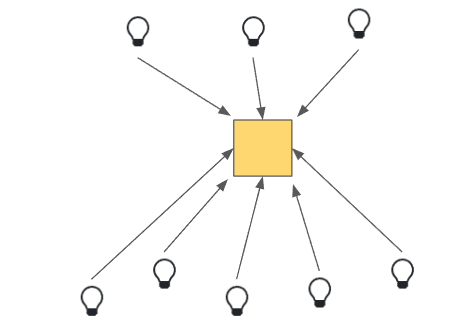

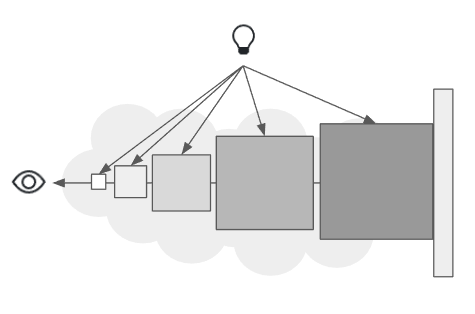

The thing here is that there is only one light in that diagram and we are facing a slightly more complex problem, here's an updated diagram:

Waaay too many lights in an area, and although there is a list of potentially visible lights, many of them are completely or partially occluded. To deal with visibility tutorials/blog posts/videos usually suggest using shadow maps, well, guess what, we don't have those. We also have dynamic lights, you know, storm troopers are usually shooting at the player with their blasters. I guess the only upside here is that we are dealing with homogeneous media, that means the particles within our volume are evenly scattered. I'm happy they didn't add back then some crazy smoke plume or something similar.

Of course we want interactive frame rates, the player should be able to move around, so we can't really stay still to accumulate anything.

Our requirements are:

- Physically accurate fog rendering

- Thousands of lights, static and dynamic

- No shadow maps

- Interactive frame rate

First things first, since we want physically accurate fog, we will need math, I'm truly sorry.

The following equation deals with the light in-scattered along a ray of origin o and direction d that travels through a volume S distance [1][2]:

Where T is the transmittance function, σs is scattering coefficient ,f𝜌 is the phase function and Li is the incoming light, since we are dealing with homogeneous media, scattering is a constant and our transmittance equation is [2]:

Where σ_ext is extinction coefficient, our equation finally looks like:

Oh noes, 2 integrals, don't worry, we'll deal with both. The inner integral sums up all light interactions on a particle and how much of that light is in-scattered in the direction of our ray. Of course along our ray there will be a huge amount of particles and we need to sum all those contributions, and that's what the integral on the outside actually does.

Let's deal with the integral along the ray first, it's the boring one. There are millions of particles in the path of our ray and we can't just add them up, remember interactive frame rates, so we need to approximate the value of it, to do that we will use a Riemann sum, which basically means that we move along the ray in small steps, at each step we take a sample particle, evaluate it's light contribution and multiply that by the length of our small step and at the end add the values of all our steps. Yes, I described ray marching, how original [2].

But don't leave just yet, the other integral is where the fun begins, it tells us that the in-scattered light in a certain direction is the result of adding up all the light interactions on a particle. Since we have possibly hundreds of lights and none of them have a shadow map, how do we do it? We will approximate the value using Monte Carlo integration, so our equation looks like:

Now we need a strategy to get some light samples and their PDF. We can't directly sample that function but we can use Resampled Importance Sampling (RIS) where we use a cheap p(x) function to sample a more complex p̂(x) one, we do that and draw a good sample, then for visibility we could shoot a shadow ray, but those are very expensive and the idea of interactive frame rates goes out the window, so we need something else.

What if we want to share good samples among rays? And if we have good samples, could we reuse them across frames? At this point you are certain that I'm about to bring up reservoirs[5][6], and yes, that's what we will use, but instead of using ReSTIR, we will use ReGIR[3], let me explain why.

In ReGIR we use a world-space grid, and in each voxel we store some reservoirs that represent light samples, these light samples are likely to contribute more to a certain area or volume in our case. As with any form of reservoir, we need a p̂(x) function, in this particular it is the incoming radiance to the voxel, that's it, although this might be a sub-optimal function, we can use it to resample a better p̂'(x) function. When calculating the incoming radiance remember to take into account the transmittance of the medium. Testing for visibility each sample is paramount, it will really help alleviate the problem of visibility as the number of needed shadow rays is greatly reduced and we can consider the samples within the voxel to be reasonably visible.

Another clear advantage of using ReGIR is that as we update those samples each frame, dynamic lights will make it into those voxels and will be discarded if they either disappear or are too far from the voxel. Also the samples within a voxel are independent of the camera movement, to a certain degree as we will discuss later on, and can be resampled each frame to bring in high quality and stable results. This is just an overview of why we want to use ReGIR, for more details on how it can be implemented check Chapter 23 of Ray Tracing Gems Vol 2 [3].

One thing that ReGIR doesn't specify and that we get to choose is the kind of grid that we will use, for that I found the adaptive hash-grid that Guillaume Boissé describes in his paper [4] quite convenient, voxels are small close to the camera and get larger as they are farther away, of course moving the camera changes the size of voxels.

This means we get greater detail up close and coarser the farther we are from the camera, using our shadow rays where they matter most.

Also, if you think about our transmittance function, we get exponentially less light the farther we move on our ray, so we can adjust the size of each step based on the size of the voxel we are at, taking larger steps and reducing memory accesses and computation, and adaptive ray marching if you will. It would look something like this:

I told you there would be hacks. Now let's go back to the function we are trying to sample:

Where Li is the incoming light, ,f𝜌 is a phase function which could be isotropic scattering or something like the Henyey-Greenstein phase function. So using our ReGIR reservoirs within the voxel we are at, we create a new reservoir and stream them to sample this better p̂'(x) function using the following weight[5]:

Where r_regir is our ReGIR reservoir, p̂'(x) is the function we are resampling. Once we do that, we can calculate the contribution weight W and use it along our sample [5] to estimate the integral we need.

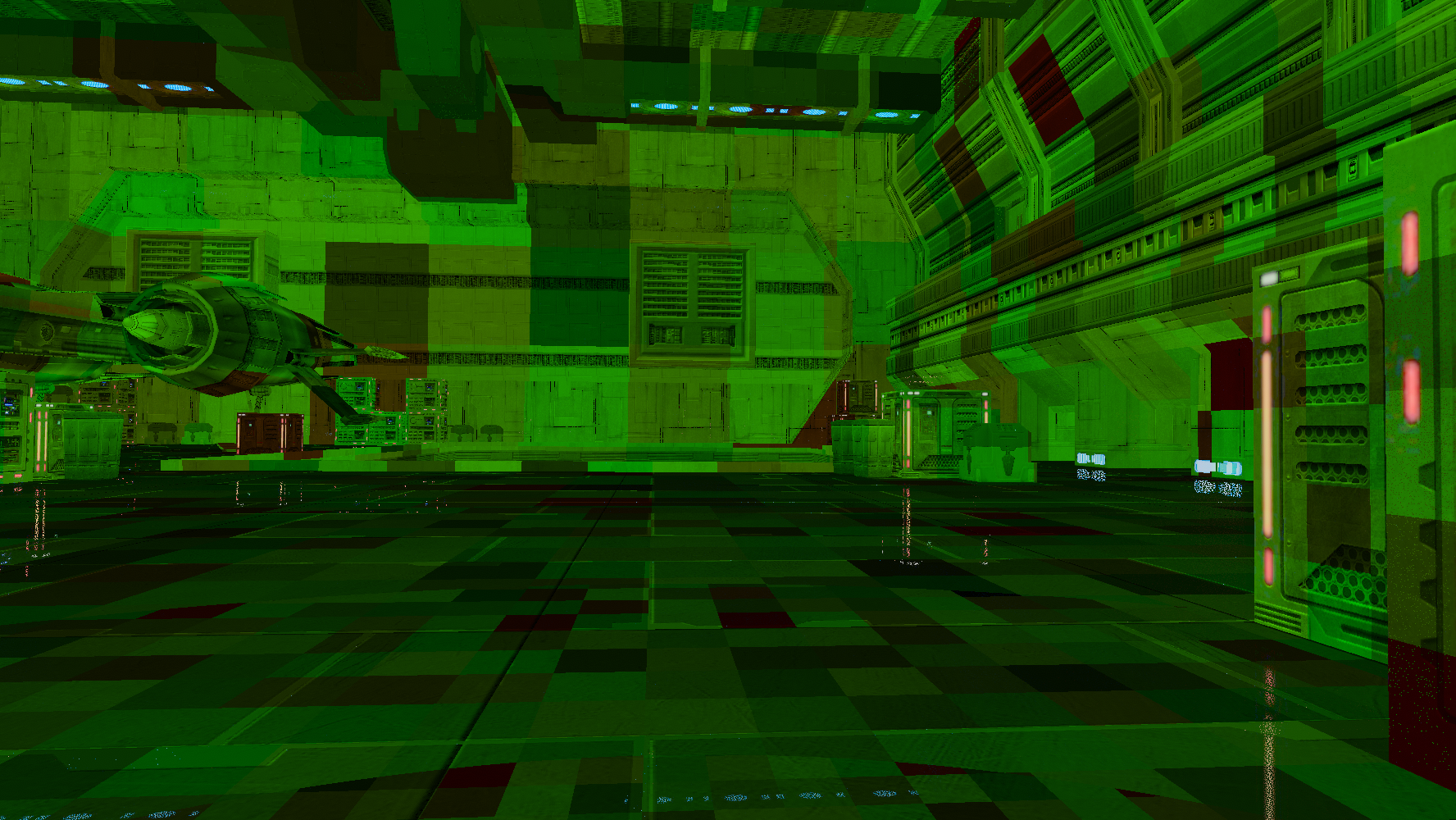

Recap, we will use an adaptive hash-grid that we will fill with ReGIR light samples, we will ray march through said grid and use the light samples to estimate the in-scattering of a sample on each step, the steps will increase in length proportional to the size of a voxel. How does it look?

Well, we can see the voxels and it doesn't look very nice, what are we missing? Jittering! But not only the distance within the step but also try to get neighboring voxels.

Does it improve?

Looks much better, quality can be improved depending on how you fill the grid, in this particular, as you can tell, I'm skipping several voxels. It gives the volume a look and feel of a heterogeneous volume, so I'm gonna leave it as is and call it artistic choice.

Will a phase function work correctly? Yes.

Also adjusting the extinction coefficient.

Denoising? 3x3 Gaussian blur ¯\_(ツ)_/¯(Didn't use it for most of the screenshots though).

What are the downsides of this technique? Since visibility is related to whether those light samples are visible to the voxel and it can be big, we don't get those sharp God Rays/light shafts/etc, it all looks soft, to fix that I guess you could substantially reduce the size of the steps when ray marching and actually shoot a shadow ray to check for visibility. Also this only covers direct lighting, indirect lighting within the volume is not covered here. Aaand jittering might bring in light leaking.

Is it all worth it? Here are some screenshots of how the whole thing looks like.

Footnotes

[1] Ray Tracing Gems, Chapter 28 "Ray Tracing Inhomogeneous Volumes"

[2] Monte Carlo methods for volumetric light transport simulation

[3] Ray Tracing Gems II, Chapter 23 "Rendering many lights with grid-based reservoirs"

[4] World-Space Spatiotemporal Reservoir Reuse For Ray-Traced Global Illumination

[5] Spatiotemporal reservoir resampling for real-time ray tracing with dynamic direct lighting

[6] How to add thousands of lights to your renderer and not die in the process